A data-centric approach to patching systems with Ansible


When patching Linux machines these days I could forgive you for asking “how hard can it be?” Sure, a yum update -y will sort it for you in a flash…

But for those of us working with estates larger than a handful of machines it’s not that simple. Sometimes an update can create unintended consequences across many machines and you’re left wondering how to put things back the way they were. Or you might think “should I have applied the critical patch on its own and saved myself a lot of pain?”

Facing these sorts of challenges in the past led me to build a way to ‘cherry-pick’ the updates needed and automate their application.

A flexible idea

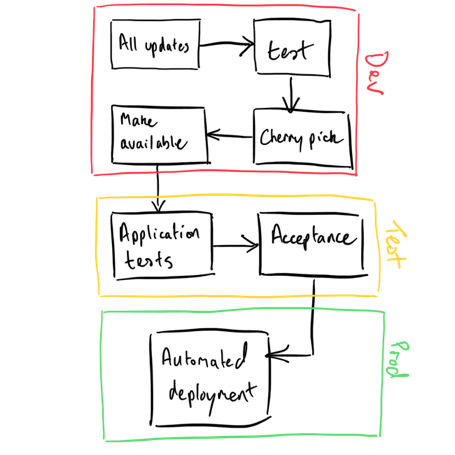

Here’s an overview of the process which we’ll apply technology to in a moment…

Under this system, we do not permit machines direct access to vendor patches. Instead, they’re selectively subscribed to repositories. Repositories contain only the patches required – although I’d encourage you to give this careful consideration so you don’t end up with a proliferation of repositories (another management overhead you’ll not thank yourself for making).

Now patching a machine comes down to 1) the repositories it’s subscribed to and 2) the ‘thumbs up’ to patch. With variables controlling both subscription and permission to patch, we need not tamper with the logic (the plays); we only need to alter data.
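To make the ‘only alter data’ idea concrete, here is a minimal sketch of the variables a group of hosts might carry. Only patching_repos, its label key, and patchme appear in the role that follows; the file path, the remaining keys, and the URL are my own placeholders and would need to match whatever your repository template expects.

```yaml
# group_vars/web.yml (hypothetical path and values)

# Repositories this group is subscribed to; the role loops over this list.
patching_repos:
  - label: web-critical                               # becomes /etc/yum.repos.d/web-critical.repo
    description: Curated critical fixes for web hosts # assumed key
    baseurl: http://repo.example.com/web-critical/    # assumed key and placeholder URL
    gpgcheck: 1                                       # assumed key

# The 'thumbs up': leave false to configure repositories without patching.
patchme: false
```

Changing what a host receives, or whether it patches at all, is then an edit to this file rather than to the tasks.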

Here is an example Ansible role to fulfil both requirements. It manages repository subscriptions and has a simple variable to control running the patch command:

    ---
    # tasks file for patching

    - name: Include OS version specific differences
      include_vars: "{{ ansible_distribution }}-{{ ansible_distribution_major_version }}.yml"

    # One .repo file is written per entry in patching_repos
    - name: Ensure Yum repositories are configured
      template:
        src: template.repo.j2
        dest: "/etc/yum.repos.d/{{ item.label }}.repo"
        owner: root
        group: root
        mode: "0644"
      when: patching_repos is defined
      loop: "{{ patching_repos }}"
      notify: patching-clean-metadata

    # Run the notified handler now, before any patching takes place
    - meta: flush_handlers

    - name: Ensure OS shipped yum repo configs are absent
      file:
        path: "/etc/yum.repos.d/{{ patching_default_repo_def }}"
        state: absent
      # add flexibility of repos here

    # Only runs when patchme evaluates to true
    - name: Patch this host
      shell: 'yum update -y'
      args:
        # 'warn' is deprecated and has been removed in newer ansible-core releases
        warn: false
      when: patchme|bool
      register: result
      changed_when: "'No packages marked for update' not in result.stdout"

Scenarios

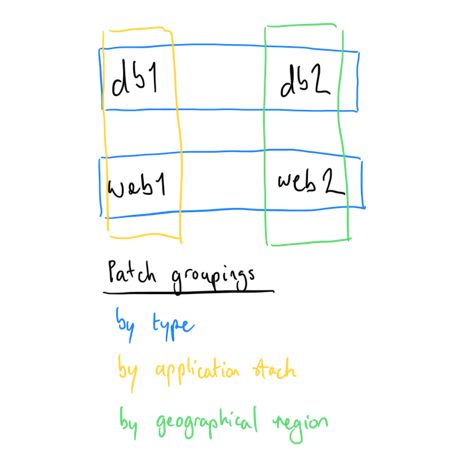

In our fictitious, large, globally dispersed environment (of four hosts) we have…

- Two web servers

- Two database servers

- An application, comprising one of each server type
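For readers who prefer to see the grouping as data, the server-type view of this estate could be written as a YAML inventory along these lines. This is a sketch only: db1 and web1 are the host names used later in this article, while web2, db2, the group names web and db, and the file name are assumptions of mine.

```yaml
# inventory/estate.yml (hypothetical file name)
all:
  children:
    web:              # assumed group name for the web servers
      hosts:
        web1:
        web2:         # assumed host name
    db:               # assumed group name for the database servers
      hosts:
        db1:
        db2:          # assumed host name
```

Each group can then carry its own patching_repos list, either in the inventory itself or in a matching group_vars file.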

OK, so the number of machines I’m showing here isn’t ‘enterprise scale’, but remove the counts and imagine the environment as multiple tiered applications, dispersed geographically. What we want to do is patch elements of the stack: across server types, application stacks, geographies, or the whole estate.

Using only changes to variables, can we achieve that flexibility? Sort of. Ansible’s default behaviour for hashes is to overwrite. In our example the patching_repos variable for our db1 and web1 hosts gets overwritten by their later occurrence in our inventory. Hmm, a bit of a pickle. There are two ways to cater for this:

- Multiple inventory files

- Change the variable behaviour

I chose the first option, because it maintains clarity. Once you start merging variables it’s hard to find where a hash appears and how it’s put together. Sticking with the default behaviour keeps each definition in one obvious place, and it is the method I’d encourage you to use, for your own sanity.
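As a rough illustration of the separate-inventory approach, the application stack could live in a file of its own, so its patching_repos never competes with the server-type definitions. The app1 group and the web1 and db1 hosts come from this article; the file name, the repository label, and the baseurl key and URL are placeholders.

```yaml
# inventory/app1.yml (hypothetical file name)
all:
  children:
    app1:
      hosts:
        web1:
        db1:
      vars:
        # Only this list is visible when the play runs against this inventory.
        patching_repos:
          - label: app1-stack                              # hypothetical repository label
            baseurl: http://repo.example.com/app1-stack/   # assumed key and placeholder URL
```

Because each run is pointed at exactly one inventory, there is only ever one definition of patching_repos per host to reason about.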

Get on with it then

Let’s run the play, focussing only on the database servers.
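If you want to follow along, a play that applies the role can be very small. The sketch below assumes the role is called patching (as its tasks file header suggests); the playbook name, inventory path, and db group name are hypothetical.

```yaml
# site.yml (hypothetical playbook name)
# Example invocation, limited to the database servers:
#   ansible-playbook -i inventory/estate.yml site.yml --limit db
- name: Configure repositories and optionally patch
  hosts: all
  become: true        # writing to /etc/yum.repos.d and running yum need root
  roles:
    - patching
```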

Did you notice the final step, ‘Patch this host’, says ‘skipping’? That’s because we didn’t set the controlling variable to actually do the patching. What we have done is set up the repository subscriptions, ready.
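One way to get that skip-by-default behaviour is a role default that switches patching off until you say otherwise. This is an assumption about how the role might be laid out, not a copy of it; the inventory path, playbook name, and web group in the comment are hypothetical too.

```yaml
# roles/patching/defaults/main.yml (assumed location)

# Safe default: set up repository subscriptions but do not run the update.
patchme: false

# Override at run time to give the 'thumbs up', for example:
#   ansible-playbook -i inventory/estate.yml site.yml --limit web -e patchme=true
```

The bool filter in the role’s when condition means a value passed on the command line as a string is still treated as a boolean.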

So let’s run the play again, limiting to the web servers, and tell it to actually do the patching. I’ll run with verbose output set, just so you can see the yum updates happening:

Patching an application stack has required another inventory file, as discussed above. Let’s run the play again:

Patching hosts in the European geography is the same ethos as the application stack, and we achieve it with another inventory file:

Finally, now all the repository subscriptions are configured, let’s just patch the whole estate. Note the app1 and emea groups don’t need the inventory here – we were only using the inventories for separation of repository definition and set up. They’re now configured, so the yum update -y patches everything. If you didn’t want to capture those repositories they could be configured as enabled=0:

Conclusion

The flexibility comes from how we group our hosts. But with the default hash behaviour we need to think about overlaps – the easiest way, to my mind at least, is with separate inventories.

With regard to repository set up, I’m sure you’ve already said to yourself, “ah, but the cherry picking isn’t that simple!” In this model there is additional overhead to download patches, test they work together, and bundle them with dependencies in a repository. With complementary tools you could automate the process, and in a large scale environment you’d have to.

There is a part of me drawn to just applying full patch sets as a simpler and easier way to go. Skip out the cherry picking part, and apply a full set of patches to a ‘standard build’. I’ve seen this approach applied to both UNIX and Windows estates in the past – with an enforced quarterly update.

I’d be interested in hearing your experiences of patching regimes, and the approach proposed here, via Mastodon.