Should we be polite to AI?


Elaine Moore recently wrote an article in the FT titled “We might be surprised by our reactions to generative AI”. It got me thinking about the way we ‘speak’ to generative AI, like ChatGPT.

Like many in the tech community, I’ve been using ChatGPT for most of this year. I’ve found it to be the search engine I wish we’d always had, and a fabulous co-pilot for so many tasks. As a distant former ‘hands on’ technologist, I’m very rusty at anything that involves writing code. Yet I’ve found myself wanting to automate problems away, or solve things in a simpler fashion (i.e. get a computer to do it). So I’ve turned to ChatGPT to help me write code, and I’ve found it incredibly helpful. It is a productivity booster for me.

I started out making my prompts terse, ‘search engine style’. But then I changed, communicating with it like I would with a person. This might seem a bit daft, but actually it feels better to me. Internally, the way I think about communicating with people is to be respectful and kind. I treat people the way I like to be treated.

I forget where I read it, maybe something by Kahneman or Thaler, but the quote “people’s behaviour is a product of the system they are part of”¹ comes to mind. To that end, I see being kind on the inside as a precursor to being kind on the outside. Call me soft, but I anthropomorphise my dog with the greatest of ease, and so I feel like doing the same to an AI detracts nothing from how I think about communication.

Here’s a snippet of a ‘conversation’ I had with ChatGPT about analysing the stock market. Am I daft to use manners? What do you think? I welcome your thoughts via Mastodon or LinkedIn.

Update

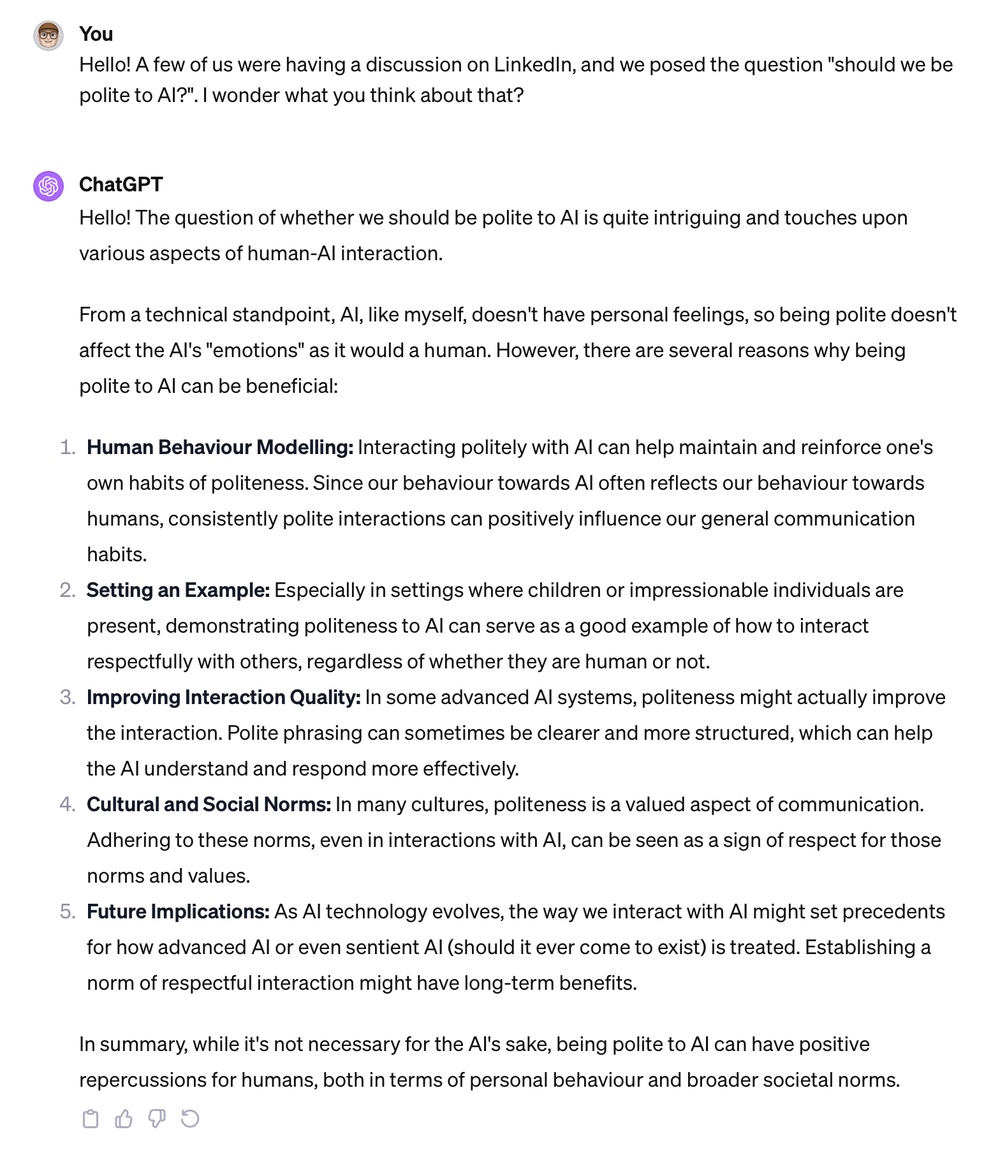

This sparked a bit of conversation on LinkedIn, with Stuart asking if I’d tried asking the AI itself. 😊 Fair point. So I did:


1. It’s probably a paraphrase of B. F. Skinner’s “environment determines behaviour”.